How to Optimize for Multimodal AI Search When Google Lens Processes 12 Billion Visual Queries Monthly and Your Text-Only Strategy Is Leaving Revenue on the Table

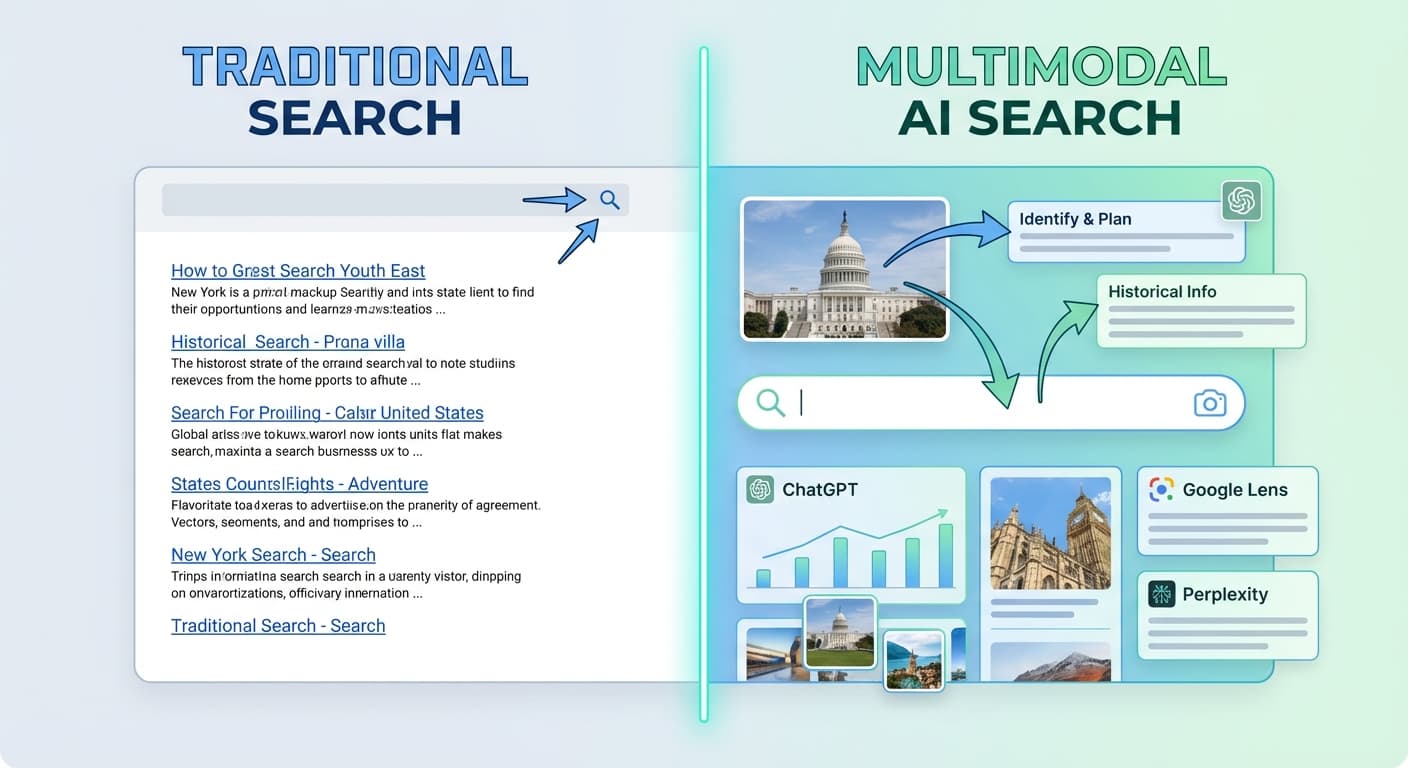

In 2026, Google Lens processes more than 12 billion visual queries a month, and multimodal models such as ChatGPT's GPT-4V and Gemini have fundamentally changed how people search for information. Yet 78% of content creators still optimize only for text-based queries, leaving substantial revenue from visual and multimodal search on the table.

If your content strategy doesn't account for how AI systems now interpret images, videos, and text together, you're competing with one hand tied behind your back. Here's how to fix that.

The Multimodal Search Revolution: Why 2026 Changed Everything

The numbers tell the story:

But here's what most marketers miss: it's not just about having images. It's about creating content that AI systems can understand across multiple formats simultaneously.

When someone asks ChatGPT "What's the best laptop for video editing?" while uploading a photo of their current setup, or when they use Google Lens to identify a product and ask follow-up questions, AI systems are processing visual and textual context together.

Understanding How Multimodal AI Actually Works

The Three Pillars of Multimodal Understanding

1. Visual Context Recognition

AI systems now analyze:

2. Semantic Bridge Building

AI creates connections between:

3. Intent Interpretation

Multimodal AI considers:

The Five-Step Multimodal Optimization Framework

Step 1: Audit Your Visual Content Strategy

Start by analyzing your current visual assets:

Content Inventory Checklist:

AI-Readiness Assessment:
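One way to start the inventory is a quick script that lists every image on a page and flags the ones without alt text. The sketch below is a minimal example using Python's standard-library `html.parser`. The `ImageAuditParser` class and `audit_images` function are hypothetical names, and a real audit would also need to fetch pages, walk your sitemap, and handle images injected by JavaScript.

```python
from html.parser import HTMLParser


class ImageAuditParser(HTMLParser):
    """Collect every <img> tag and record whether it has usable alt text."""

    def __init__(self):
        super().__init__()
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        # A bare `alt` attribute parses as None, so treat it like an empty string
        alt = (attr_map.get("alt") or "").strip()
        self.images.append({"src": attr_map.get("src", ""), "alt": alt, "missing_alt": not alt})


def audit_images(html):
    """Return the image records and the share of images with non-empty alt text."""
    parser = ImageAuditParser()
    parser.feed(html)
    parser.close()
    total = len(parser.images)
    with_alt = sum(1 for img in parser.images if not img["missing_alt"])
    # A page with no images has nothing to fix, so report full coverage
    coverage = with_alt / total if total else 1.0
    return parser.images, coverage


page = '<img src="/img/shoe.jpg" alt="Red trail running shoe"><img src="/img/banner.png">'
images, coverage = audit_images(page)
for img in images:
    if img["missing_alt"]:
        print("Missing alt text:", img["src"])  # Missing alt text: /img/banner.png
print(f"Alt-text coverage: {coverage:.0%}")  # Alt-text coverage: 50%
```

An empty alt attribute is the correct choice for purely decorative images, so treat the flagged list as a review queue rather than a list of errors.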

Step 2: Create AI-Interpretable Visual Content

Image Optimization Best Practices:

Visual Hierarchy for AI:
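Descriptive file names are one of the easiest image practices to automate. Google's image SEO guidance, for example, favors a descriptive name over a camera default like `IMG_0042.JPG`. The helper below is a hypothetical sketch: it turns a short, plain-language description into a lowercase, hyphenated file name and keeps the original extension.

```python
import re
import unicodedata
from pathlib import PurePath


def descriptive_filename(description, original_name):
    """Build a lowercase, hyphenated file name from a plain-language description,
    keeping the original file's extension (lowercased)."""
    # Decompose accented characters and drop the accents, so "Café" becomes "cafe"
    text = unicodedata.normalize("NFKD", description)
    text = text.encode("ascii", "ignore").decode("ascii").lower()
    # Drop apostrophes so "men's" becomes "mens" instead of "men-s"
    text = text.replace("'", "")
    # Collapse every run of non-alphanumeric characters into a single hyphen
    slug = re.sub(r"[^a-z0-9]+", "-", text).strip("-")
    extension = PurePath(original_name).suffix.lower()
    return f"{slug}{extension}"


print(descriptive_filename("Men's red trail-running shoe, side view", "IMG_0042.JPG"))
# mens-red-trail-running-shoe-side-view.jpg
```

Keep the description short and accurate to what the image shows; a file name is not the place for a keyword list.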

Step 3: Optimize for Cross-Platform Visual Search

Platform-Specific Considerations:

Google Lens Optimization:

ChatGPT Vision Integration:

Perplexity and Claude Optimization:

Step 4: Implement Semantic Visual-Text Alignment

The key to multimodal success is ensuring your visual and textual content work together seamlessly:

Content Synchronization Strategies:

Tools like Citescope Ai can help you analyze how well your content performs across these multimodal dimensions through its AI Interpretability score, which includes visual-text alignment factors.
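For a rough do-it-yourself check of visual-text alignment, you can compare the words in an image's alt text with the words in its caption or surrounding paragraph. The sketch below uses Jaccard similarity (shared words divided by all distinct words) with a small, hypothetical stop-word list. It is only a proxy, not how AI systems or Citescope Ai score alignment, and it ignores synonyms entirely, so treat a low score as a prompt for manual review.

```python
import re

# A tiny, illustrative stop-word list; a real check would use a fuller one
STOP_WORDS = {"a", "an", "the", "and", "or", "of", "for", "with", "in", "on", "to", "is"}


def content_words(text):
    """Lowercase word tokens with stop words removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOP_WORDS}


def alignment_score(alt_text, surrounding_text):
    """Jaccard similarity (0.0 to 1.0) between the content words of the alt text
    and the content words of the caption or paragraph next to the image."""
    alt_words = content_words(alt_text)
    context_words = content_words(surrounding_text)
    if not alt_words or not context_words:
        return 0.0
    return len(alt_words & context_words) / len(alt_words | context_words)


caption = "Our lightweight trail running shoe in red"
print(f"{alignment_score('Red trail running shoe', caption):.2f}")  # 0.67
print(f"{alignment_score('Happy team in office', caption):.2f}")  # 0.00
```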

Step 5: Monitor and Measure Multimodal Performance

Key Metrics to Track:
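Which metrics matter depends on your goals, but click-through rate from image search is an easy signal to start with. Assuming you have exported image-search performance rows (for example, from Google Search Console with the search type set to Image) into dictionaries with `page`, `clicks`, and `impressions` keys, a hypothetical helper like this one ranks pages from lowest to highest CTR so under-performing visuals surface first. The `min_impressions` cutoff is an arbitrary illustrative value that keeps low-traffic pages from producing noisy rates.

```python
def image_search_ctr(rows, min_impressions=100):
    """Return (page, CTR) pairs, lowest CTR first, for pages with enough impressions."""
    ranked = [
        (row["page"], row["clicks"] / row["impressions"])
        for row in rows
        # Skip pages whose impression counts are too small to give a stable rate
        if row["impressions"] >= min_impressions
    ]
    return sorted(ranked, key=lambda pair: pair[1])


# Illustrative rows only, not real performance data
rows = [
    {"page": "/shoes/trail", "clicks": 12, "impressions": 400},
    {"page": "/shoes/road", "clicks": 30, "impressions": 500},
    {"page": "/blog/shoe-care", "clicks": 1, "impressions": 40},
]
for page, ctr in image_search_ctr(rows):
    print(f"{page}: {ctr:.1%}")
```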

Advanced Multimodal Strategies for 2026

Video Content Optimization

With AI systems now processing video content more effectively:

Interactive Visual Elements

AI systems increasingly recognize and value interactive content:

Seasonal and Trending Visual Optimization

Stay ahead of visual search trends by:

Common Multimodal Optimization Mistakes to Avoid

The "Pretty Pictures" Trap

Adding irrelevant stock photos doesn't improve multimodal performance. Every visual element should serve a specific purpose and add genuine value.

Over-Optimization

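Stuffing keywords into alt text and file names without considering user experience can backfire. Search engines already treat keyword stuffing as spam (Google's spam policies name it explicitly), and obvious over-optimization is easy for AI systems to detect and discount.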
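A simple pre-publish check can catch the most obvious stuffing before it ships. This sketch flags alt text that is unusually long or repeats the same term; the function name and both thresholds are hypothetical starting points to tune, not limits published by any search engine or AI platform.

```python
import re
from collections import Counter


def alt_text_warnings(alt_text, max_words=16, max_repeats=1):
    """Return human-readable warnings for alt text that looks keyword-stuffed."""
    warnings = []
    words = re.findall(r"[a-z0-9]+", alt_text.lower())
    if len(words) > max_words:
        warnings.append(f"too long ({len(words)} words)")
    # Ignore words of three letters or fewer so "the" or "and" don't trigger the check
    repeated = sorted(
        w for w, n in Counter(words).items() if n > max_repeats and len(w) > 3
    )
    if repeated:
        warnings.append("repeated terms: " + ", ".join(repeated))
    return warnings


print(alt_text_warnings("cheap shoes best shoes buy shoes cheap running shoes"))
# ['repeated terms: cheap, shoes']
print(alt_text_warnings("Red trail running shoe, side view"))
# []
```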

Platform Inconsistency

Using different visual styles across platforms confuses AI systems and weakens brand recognition. Maintain visual consistency while adapting to platform requirements.

Ignoring Mobile Visual Search

With 85% of visual searches happening on mobile devices, desktop-only optimization strategies miss the majority of opportunities.

How Citescope Ai Helps with Multimodal Optimization

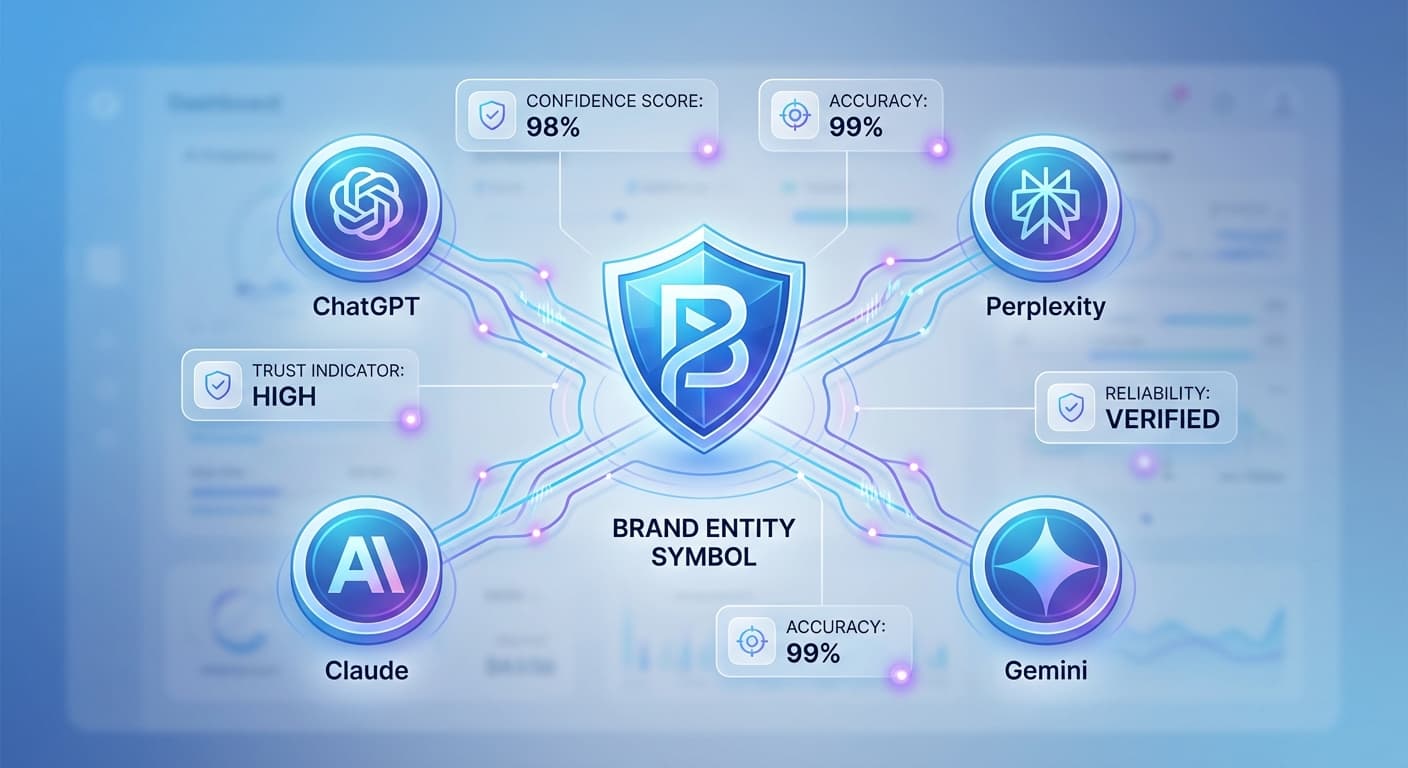

Implementing a comprehensive multimodal strategy can seem overwhelming, but Citescope Ai's GEO Score analyzes how well your content performs across multiple AI interpretation dimensions, including visual-text alignment and semantic richness.

Our AI Rewriter doesn't just optimize text: it also recommends a structure for your visual content and suggests improvements for multimodal AI visibility. The Citation Tracker monitors when AI systems reference your content in multimodal contexts, giving you insight into which visual-text combinations perform best.

With multi-format export capabilities, you can easily implement optimized content across different platforms while maintaining consistency in your multimodal approach.

The ROI of Multimodal Optimization

Companies implementing comprehensive multimodal strategies in 2026 report:

The investment in multimodal optimization pays dividends across multiple channels, from traditional search to AI-powered discovery systems.

Ready to Optimize for AI Search?

Multimodal AI search isn't the future; it's happening right now. While your competitors focus solely on text optimization, you can capture the growing visual and multimodal search market.

Citescope Ai makes it easy to optimize your content for both traditional and AI search engines with our comprehensive GEO Score analysis, AI-powered content rewriter, and citation tracking across ChatGPT, Perplexity, Claude, and Gemini. Start with our free tier (3 optimizations per month) and see how multimodal optimization can transform your content performance.

[Start optimizing for multimodal AI search with Citescope Ai's free trial today →]