How to Build an LLMs.txt Implementation Strategy When Search Engines Transition From Robots.txt Control to AI Crawler Policy Management in 2026

Search is going through one of its largest shifts in years. Traditional search engines like Google still rely on robots.txt files to manage crawler access, while site owners targeting AI-powered search are increasingly turning to a newer, still-emerging convention: LLMs.txt (served at /llms.txt). With a growing share of searches now happening through AI interfaces like ChatGPT, Perplexity, and Claude, understanding how to implement an effective LLMs.txt strategy has become important for maintaining online visibility in 2026.

Unlike robots.txt, which only allows or blocks crawler access, LLMs.txt is meant to give you more granular control over how the AI systems that honor it interact with your content. It's the difference between hanging a "Do Not Disturb" sign on your door and providing detailed instructions about which rooms guests can visit and how they should behave.

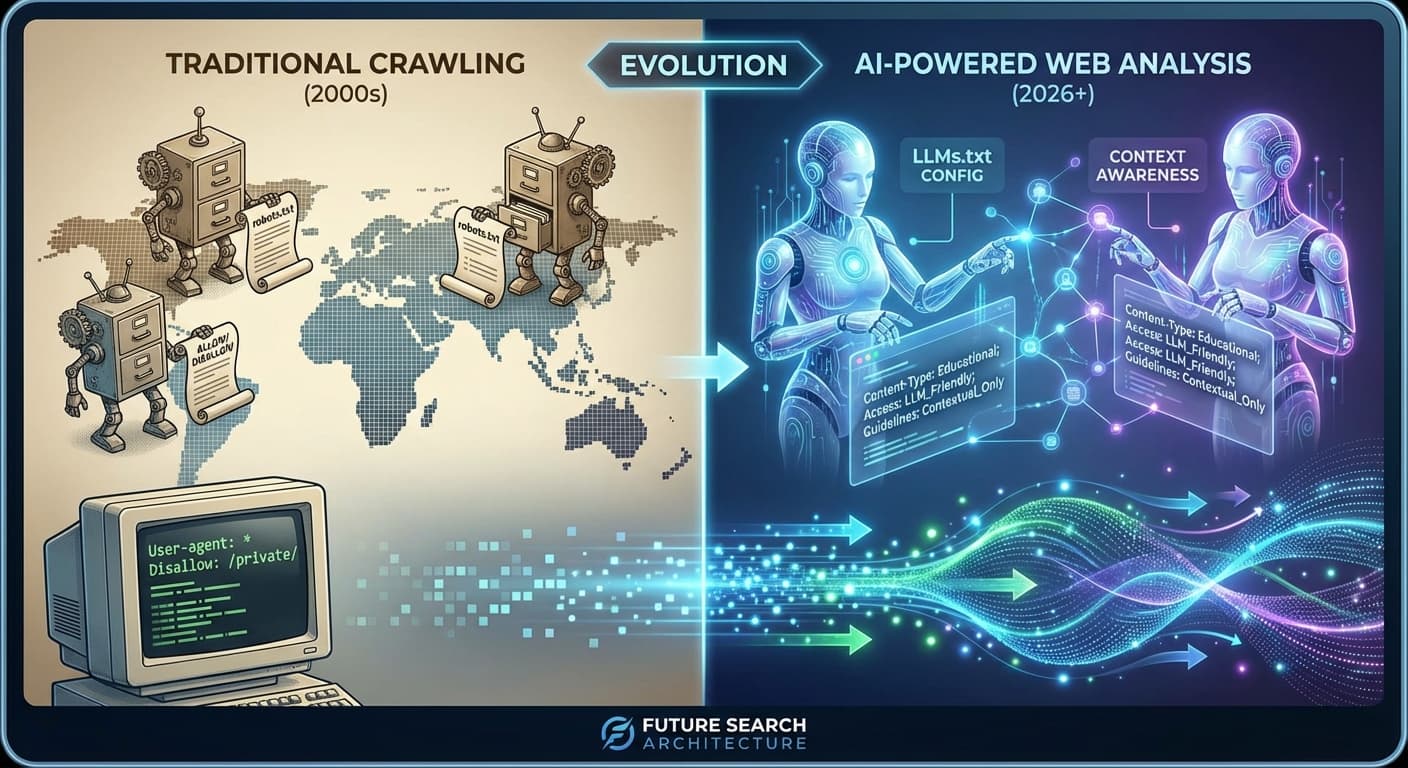

Understanding the LLMs.txt Evolution

The transition from robots.txt to LLMs.txt represents more than a technical upgrade. It reflects a shift in how we think about content accessibility and AI training data. Traditional search crawlers index pages primarily for retrieval and ranking, while AI crawlers analyze content for semantic understanding, context, and citation potential.

Key Differences Between Robots.txt and LLMs.txt

Robots.txt limitations:

LLMs.txt advantages:

Google's reported plan to begin recognizing LLMs.txt files alongside robots.txt by Q3 2026 would signal mainstream adoption of the convention; confirm the timeline against Google's own crawler documentation before planning around it. Early adopters who implement LLMs.txt now stand to benefit as AI search takes a larger share of user behavior.

Core Components of an Effective LLMs.txt Strategy

1. Content Classification and Prioritization

Before creating your LLMs.txt file, conduct a comprehensive content audit to classify your pages based on their value for AI citation:

High-priority content:

Medium-priority content:

Low-priority or restricted content:
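The three tiers above can be sketched as a small prefix-based classifier. This is a minimal illustration rather than a prescribed method: the path prefixes are borrowed from the example file later in this article, while the `/news/` prefix, the tier names, and the "medium" default are assumptions you would replace with the results of your own audit.

```python
# Hypothetical prefix-to-tier rules; the first matching prefix wins.
TIER_RULES = [
    ("/internal/", "low"),   # low-priority or restricted content
    ("/admin/", "low"),
    ("/research/", "high"),
    ("/blog/", "high"),
    ("/news/", "medium"),    # assumed section, for illustration only
]
DEFAULT_TIER = "medium"      # assumption: unclassified pages get a manual review


def classify(path: str) -> str:
    """Return the tier of the first rule whose prefix matches the path."""
    for prefix, tier in TIER_RULES:
        if path.startswith(prefix):
            return tier
    return DEFAULT_TIER


def audit(paths):
    """Group paths by tier so each group can be reviewed together."""
    groups = {"high": [], "medium": [], "low": []}
    for path in paths:
        groups[classify(path)].append(path)
    return groups
```

Feeding `audit()` the URL paths from your sitemap gives a first-pass grouping that stakeholders can then correct by hand.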

2. Citation Preference Configuration

One of LLMs.txt's most powerful features is the ability to specify how you want your content cited. Consider these options:

3. Content Freshness and Update Frequency

AI systems need to understand how current your information is. Use LLMs.txt to communicate:

Building Your LLMs.txt Implementation Roadmap

Phase 1: Foundation Setup (Weeks 1-2)

Week 1: Content Audit and Classification

Week 2: Stakeholder Alignment

Phase 2: LLMs.txt Creation (Weeks 3-4)

Technical Implementation:

LLMs.txt Example Structure

    User-agent: *
    Allow: /blog/
    Allow: /research/
    Disallow: /internal/
    Disallow: /admin/
    Citation-policy: full-attribution-required
    Update-frequency: weekly
    Content-type: educational, analytical
    Freshness-priority: high
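To work with a file in this shape programmatically, for example to review it in CI or compare versions, it can be read into a dictionary. The following is a rough sketch that assumes the `Key: value` layout shown above. The directive names are taken from this article's example and are not verified against any published specification, and `parse_policy` is a hypothetical helper name.

```python
EXAMPLE = """\
User-agent: *
Allow: /blog/
Allow: /research/
Disallow: /internal/
Disallow: /admin/
Citation-policy: full-attribution-required
Update-frequency: weekly
Content-type: educational, analytical
Freshness-priority: high
"""

# Directives that may repeat; every other key is treated as single-valued.
REPEATABLE = {"allow", "disallow"}


def parse_policy(text: str) -> dict:
    """Parse 'Key: value' lines into a dict, skipping blanks and # comments."""
    policy = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if not line or ":" not in line:
            continue
        key, value = line.split(":", 1)
        key, value = key.strip().lower(), value.strip()
        if key in REPEATABLE:
            policy.setdefault(key, []).append(value)
        else:
            policy[key] = value  # a later duplicate overwrites an earlier one
    return policy
```

Calling `parse_policy(EXAMPLE)` yields lists under `"allow"` and `"disallow"` and plain strings for the remaining keys, which makes it easy to diff two versions of the policy.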

Key Configuration Elements:

Phase 3: Testing and Optimization (Weeks 5-6)

Before going live, validate your LLMs.txt implementation:
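One check that can be automated is whether the Allow and Disallow rules behave as intended. Because the example file in this article borrows robots.txt syntax for those rules, a minimal sketch can reuse Python's standard `urllib.robotparser`. The crawler name `ExampleAIBot`, the test paths, and the `check_access` helper are hypothetical, and non-robots directives such as `Citation-policy` are simply ignored by this parser.

```python
from urllib.robotparser import RobotFileParser

POLICY_LINES = [
    "User-agent: *",
    "Allow: /blog/",
    "Allow: /research/",
    "Disallow: /internal/",
    "Disallow: /admin/",
    "Citation-policy: full-attribution-required",  # unknown to the parser, skipped
]

# Hypothetical paths mapped to the access you expect crawlers to get.
EXPECTED = {
    "/blog/launch-post": True,
    "/research/report": True,
    "/internal/roadmap": False,
    "/admin/login": False,
}


def check_access(lines, expected, agent="ExampleAIBot"):
    """Return (path, expected, actual) tuples for every rule that misbehaves."""
    parser = RobotFileParser()
    parser.parse(lines)
    failures = []
    for path, want in expected.items():
        got = parser.can_fetch(agent, path)
        if got != want:
            failures.append((path, want, got))
    return failures
```

An empty list from `check_access(POLICY_LINES, EXPECTED)` means every path matched expectations. Note that this parser treats a blank line as the end of a rule group, so the directives for one user agent should be kept on consecutive lines.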

Citescope Ai's GEO Score feature can help evaluate how well your content aligns with AI visibility best practices, analyzing factors like semantic richness and conversational relevance that directly impact citation potential.

Phase 4: Monitoring and Iteration (Ongoing)

Monthly Review Process:

Quarterly Strategic Assessment:

Advanced LLMs.txt Strategies for 2026

Dynamic Content Policies

Implement conditional rules based on:

Multi-Domain Coordination

For organizations with multiple domains:

Integration with Existing SEO Tools

Align your LLMs.txt strategy with:

Common LLMs.txt Implementation Pitfalls

1. Over-Restriction

Many organizations initially implement overly restrictive policies, limiting AI visibility unnecessarily. Start permissive and refine based on actual usage patterns.

2. Inconsistent Citation Policies

Maintain consistent citation requirements across similar content types to avoid confusing AI systems.

3. Neglecting Mobile Content

Ensure your LLMs.txt policies account for mobile-specific content and AMP pages.

4. Ignoring International Considerations

Consider regional AI systems and varying privacy regulations when setting content policies.

Measuring LLMs.txt Success

Key Performance Indicators

Traffic Metrics:

Visibility Metrics:

Business Impact:
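For the traffic side of these KPIs, a rough starting point is counting AI crawler requests in your server access logs. The sketch below assumes logs in the common "combined" format and matches three crawler names (GPTBot, ClaudeBot, PerplexityBot) by substring; the list is illustrative rather than exhaustive, and `count_ai_hits` is a hypothetical helper.

```python
import re
from collections import Counter

# Substrings identifying some AI crawlers in the User-Agent header (not exhaustive).
AI_AGENTS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Combined log format: "METHOD path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def count_ai_hits(lines):
    """Count requests per (crawler, path) for lines that match a known AI agent."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines in an unexpected format
        for agent in AI_AGENTS:
            if agent in match.group("ua"):
                hits[(agent, match.group("path"))] += 1
                break
    return hits
```

Running this over the same log window before and after a policy change gives a simple baseline for whether AI crawlers are reaching the sections you opened up.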

How Citescope Ai Helps Navigate the Transition

As search engines transition to AI-first approaches, tools like Citescope Ai become essential for maintaining content visibility. The platform's Citation Tracker monitors when your content gets referenced by major AI systems, helping you understand which LLMs.txt configurations drive the best results.

The AI Rewriter feature can optimize your existing content for better AI comprehension, while the GEO Score provides actionable insights into how well your content aligns with AI search algorithms. This data-driven approach ensures your LLMs.txt implementation delivers measurable results.

Preparing for the Future of AI Search

The shift from robots.txt to LLMs.txt is just the beginning. As AI search continues evolving, we can expect:

Organizations that establish robust LLMs.txt strategies now will be best positioned to capitalize on these developments.

Ready to Optimize for AI Search?

The transition to AI-powered search represents the biggest opportunity in content marketing since the rise of Google. Don't let your content get lost in the shift from traditional to AI search. Citescope Ai's comprehensive platform helps you track, optimize, and measure your content's performance across all major AI search engines. Start with our free tier today and see how AI search optimization can transform your content strategy.