How to Build an AI Bot Quality Score Framework: From 47 Crawlers to 8 Citation Drivers

Here's a reality check: your content is being crawled by dozens of AI agents daily, but only a handful actually drive meaningful citations. In 2025, content creators face a paradox: while 47+ different LLM agents might be indexing their content, research shows that just 8 core AI systems generate 94% of all content citations.

This disparity creates a critical challenge: How do you optimize for quality over quantity when it comes to AI visibility?

The Hidden Reality of AI Content Crawling

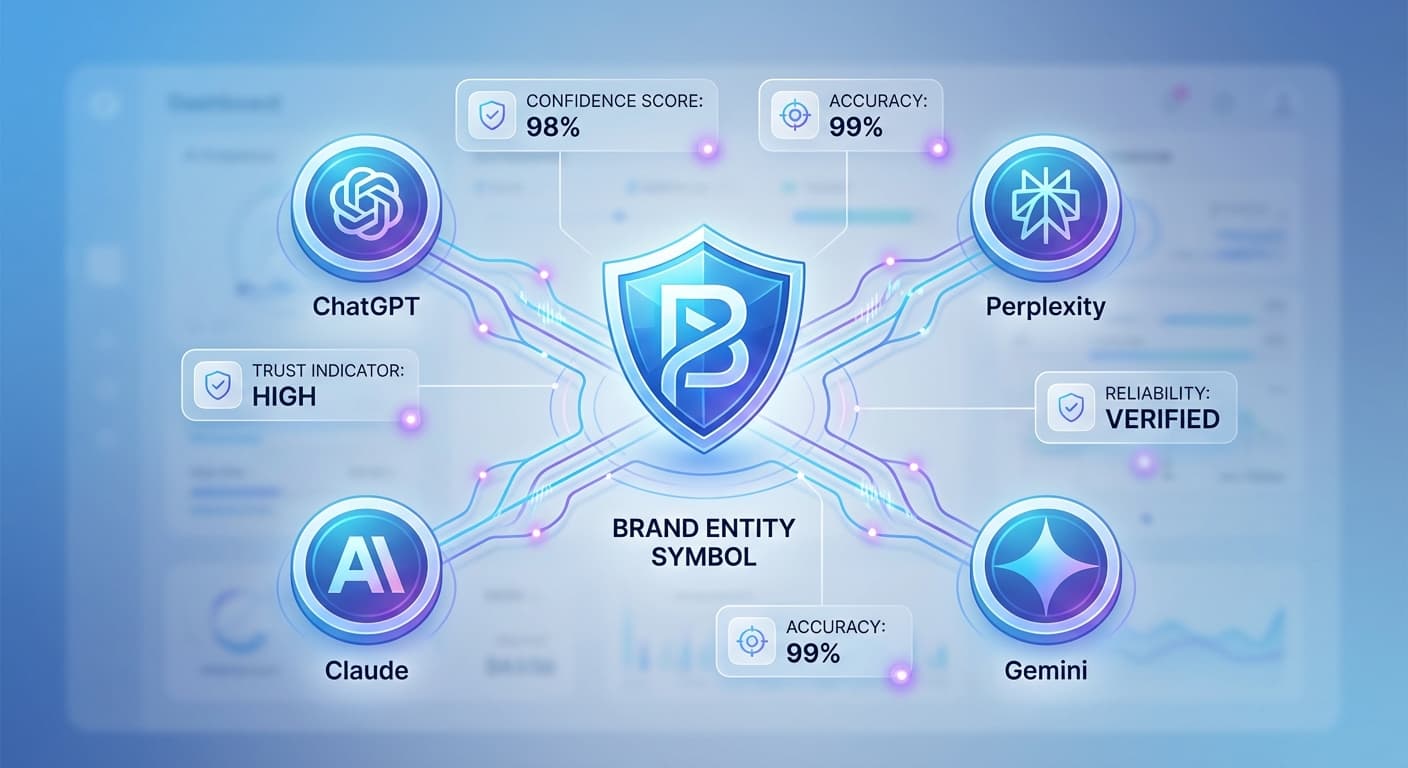

The AI search landscape has exploded beyond the big four (ChatGPT, Perplexity, Claude, and Gemini). Today's ecosystem includes:

Yet here's the kicker: Despite this massive crawling activity, only 8 AI systems consistently generate citations that drive actual traffic and authority. These citation drivers are:

Why Most AI Crawlers Don't Convert to Citations

Understanding this gap is crucial for building an effective quality score framework. Here's why most crawlers fail to generate meaningful citations:

Crawling vs. Citing Behavior

Many AI agents crawl content for training or indexing purposes but lack the sophisticated retrieval mechanisms needed for real-time citation generation. They're essentially digital librarians who catalog books but never recommend them.

Quality Thresholds

The top 8 citation drivers have implemented strict quality filters. Content must meet specific criteria:

Technical Infrastructure

Not all AI systems have invested in the complex infrastructure required for real-time content retrieval and citation formatting. Building this capability requires significant computational resources that many smaller players lack.

Building Your AI Bot Quality Score Framework

To maximize your citation potential, you need a systematic approach that focuses on the AI systems that actually matter. Here's how to build a framework that delivers results:

Step 1: Identify Your Citation-Driving Segments

Not all content types perform equally across AI platforms. Analyze your existing content to identify patterns:

High-Citation Content Types:

Low-Citation Content Types:

Step 2: Develop Your Quality Scoring Matrix

Create a weighted scoring system based on the factors that matter most to citation-driving AI systems:

Authority Signals (25% weight):

Content Quality (30% weight):

AI Optimization (25% weight):

Freshness and Relevance (20% weight):
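
To make the weighting concrete, here is a minimal sketch of how the four categories above could be rolled up into one number. The weights come from this step; the category keys, the 0-100 subscore scale, and the `quality_score` function name are assumptions made for illustration, not part of any specific tool.

```python
# Weights from the four categories in Step 2. They sum to 1.0.
# The key names and the 0-100 subscore scale are illustrative assumptions.
WEIGHTS = {
    "authority": 0.25,
    "content_quality": 0.30,
    "ai_optimization": 0.25,
    "freshness": 0.20,
}


def quality_score(subscores: dict[str, float]) -> float:
    """Combine per-category subscores (each 0-100) into a weighted 0-100 score."""
    missing = WEIGHTS.keys() - subscores.keys()
    if missing:
        raise ValueError(f"missing subscores: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0 <= subscores[name] <= 100:
            raise ValueError(f"{name} must be between 0 and 100")
    return sum(WEIGHTS[name] * subscores[name] for name in WEIGHTS)


# Hypothetical page: strong authority, weaker freshness.
example = {
    "authority": 80,
    "content_quality": 70,
    "ai_optimization": 60,
    "freshness": 50,
}
print(round(quality_score(example), 1))  # 66.0
```

How you arrive at each subscore is up to you; the point of the sketch is only that the final score is a weighted sum, so a 10-point gain in content quality moves the total more than a 10-point gain in freshness.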

Step 3: Implement Tracking Mechanisms

You can't improve what you don't measure. Set up monitoring for:

Tools like Citescope Ai's Citation Tracker can automate much of this monitoring, providing real-time insights into when and how your content gets cited across ChatGPT, Perplexity, Claude, and Gemini.
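
If you want a rough baseline from your own data, crawler activity is one signal you can measure directly from server access logs. The sketch below counts hits whose user-agent string contains a known AI crawler token. The token list is an assumption and covers only three publicly documented crawlers; the function name and sample log lines are hypothetical. Note that this measures crawling, not citing, so it complements citation tracking rather than replacing it.

```python
from collections import Counter
from typing import Iterable

# A few publicly documented AI crawler user-agent tokens.
# This list is an illustrative assumption; extend it for your own logs.
AI_AGENT_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def count_ai_crawler_hits(log_lines: Iterable[str]) -> Counter:
    """Count log lines per AI crawler token using a case-insensitive substring match."""
    counts: Counter = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_AGENT_TOKENS:
            if token.lower() in lowered:
                counts[token] += 1
                break  # attribute each line to at most one crawler
    return counts


# Hypothetical access-log lines in combined log format.
sample_lines = [
    '203.0.113.5 - - [01/Jan/2025:00:00:01 +0000] "GET /guide HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/Jan/2025:00:00:07 +0000] "GET /guide HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.2 - - [01/Jan/2025:00:00:09 +0000] "GET /pricing HTTP/1.1" 200 300 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '203.0.113.5 - - [01/Jan/2025:00:01:15 +0000] "GET /faq HTTP/1.1" 200 420 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]
print(dict(count_ai_crawler_hits(sample_lines)))
```

Comparing these counts against observed citations per platform shows where the crawl-to-citation gap is widest.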

Step 4: Optimize Based on Performance Data

Use your quality scores to prioritize optimization efforts:

High-scoring, low-citation content: May need better AI discoverability

Low-scoring, high-citation content: Indicates untapped optimization potential

High-scoring, high-citation content: Your benchmark for future content

Low-scoring, low-citation content: Candidates for major revision or retirement
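
The four buckets above amount to a two-by-two triage on score and citation count. A minimal sketch follows; the cutoff values (a score of 70 and 5 citations) and the `triage` function name are placeholder assumptions, so set thresholds from your own data.

```python
def triage(
    score: float,
    citations: int,
    score_cutoff: float = 70.0,  # assumed threshold, tune to your data
    citation_cutoff: int = 5,    # assumed threshold, tune to your data
) -> str:
    """Map a page's quality score and citation count to one of the four Step 4 actions."""
    high_score = score >= score_cutoff
    high_citations = citations >= citation_cutoff
    if high_score and high_citations:
        return "benchmark for future content"
    if high_score:
        return "improve AI discoverability"
    if high_citations:
        return "untapped optimization potential"
    return "revise or retire"


# Hypothetical pages as (url, quality score, citation count).
pages = [
    ("/guide", 85.0, 12),
    ("/glossary", 82.0, 1),
    ("/old-post", 40.0, 9),
    ("/news-2021", 35.0, 0),
]
for url, score, citations in pages:
    print(url, "->", triage(score, citations))
```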

Advanced Framework Strategies

Persona-Based Optimization

Different AI systems have distinct "personalities" and citation preferences:

Seasonal Quality Adjustments

AI citation patterns fluctuate based on:

Build flexibility into your framework to account for these variations.

Cross-Platform Syndication Strategy

While focusing on the top 8 citation drivers, don't completely ignore the other 39+ crawlers. They may not drive citations today, but the AI landscape evolves rapidly. Consider a tiered approach:

Common Framework Pitfalls to Avoid

Over-Optimization Syndrome

Trying to optimize for all 47+ AI agents simultaneously often results in generic, watered-down content that appeals to none. Focus your efforts where they'll have the biggest impact.

Ignoring Human Readers

Remember that AI systems are trained on human-preferred content. If humans don't find your content valuable, AI systems won't either.

Static Framework Thinking

The AI landscape changes rapidly. What works today may not work in six months. Build adaptability into your framework from day one.

Metric Vanity

Not all citations are created equal. A single citation from ChatGPT in response to a high-commercial-intent query is worth more than dozens of citations from lesser-known AI platforms.

How Citescope Ai Helps

Building and maintaining an AI bot quality score framework manually is time-consuming and error-prone. Citescope Ai streamlines this process with:

GEO Score Analysis: Automatically evaluates your content across the five critical dimensions (AI Interpretability, Semantic Richness, Conversational Relevance, Structure, Authority) that drive citations from the top AI platforms.

AI Rewriter Optimization: One-click content restructuring that aligns with the specific preferences of ChatGPT, Perplexity, Claude, and Gemini, focusing your efforts on the platforms that actually drive citations.

Citation Tracking: Real-time monitoring of when and how your content gets cited across the 8 core AI systems, providing the data you need to refine your quality framework.

Multi-Format Export: Seamlessly distribute optimized content across different platforms and content management systems to maximize your reach within the citation-driving ecosystem.

Ready to Optimize for AI Search?

Stop wasting time optimizing for AI crawlers that don't drive citations. Focus your efforts on the 8 platforms that actually matter, and build a quality score framework that delivers measurable results. Citescope Ai provides the tools and insights you need to track what's working, optimize what isn't, and stay ahead of the rapidly evolving AI search landscape. Start with our free tier today and see which of your content pieces are truly resonating with the AI systems that drive traffic and authority.