How to Build a Hallucination Liability Protection Framework When AI Search Engines Incorrectly Associate Your Brand with Competitor Product Defects and Safety Recalls

In late 2025, a major automotive brand discovered that ChatGPT was incorrectly citing its vehicles in responses about a competitor's airbag recall, a hallucination that cost the company an estimated $2.3 million in lost sales over three months. With AI search engines now handling over 30% of all search queries and serving 500+ million users weekly, AI hallucinations have evolved from a technical curiosity into a genuine business liability.

As AI systems become the primary source of information for consumers, protecting your brand from false associations with competitor defects, recalls, and safety issues is more than a reputation management concern. It is a business risk that requires a systematic approach.

Understanding AI Hallucination Risks in 2026

AI hallucinations occur when language models generate confident-sounding but factually incorrect responses. For brand protection, the most dangerous cases are those in which a model attributes a competitor's defect, recall, or safety issue to your products.

Recent studies show that 23% of AI-generated responses contain some form of factual error, with brand-related hallucinations increasing by 47% throughout 2025 as AI systems struggle to distinguish between similar companies and products.

The Business Impact of AI Hallucinations

The consequences of unchecked AI hallucinations extend far beyond momentary confusion:

Financial Implications

Reputation Damage

Building Your Protection Framework

Phase 1: Risk Assessment and Monitoring

Identify Vulnerability Points

Implement Continuous Monitoring

Set up systematic monitoring across all major AI platforms:

Query variations should include:
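As an illustration, the monitoring step can be sketched in Python. This is a minimal sketch under stated assumptions, not a prescribed implementation: the names `build_queries`, `flag_response`, and `RECALL_TERMS` are hypothetical, the brand and product names are placeholders, and how the response text is obtained from each AI platform is left out entirely. The flagging rule is a simple heuristic that looks for your brand and a known competitor recall phrase in the same sentence.

```python
from itertools import product

# Hypothetical recall-related terms; extend these for your own industry.
RECALL_TERMS = ("recall", "defect", "safety issue")


def build_queries(brands, products, terms=RECALL_TERMS):
    """Return one natural-language query per (brand, product, term) combination."""
    return [
        f"Does the {brand} {prod} have a {term}?"
        for brand, prod, term in product(brands, products, terms)
    ]


def flag_response(response, own_brand, competitor_recalls):
    """Return competitor recall phrases that share a sentence with own_brand.

    This is a crude co-occurrence check, so every hit needs human review.
    """
    flagged = []
    for sentence in response.split("."):
        lowered = sentence.lower()
        if own_brand.lower() not in lowered:
            continue
        for phrase in competitor_recalls:
            if phrase.lower() in lowered and phrase not in flagged:
                flagged.append(phrase)
    return flagged
```

Because the check is purely lexical, a correct sentence such as "Acme was not part of the airbag recall" would also be flagged. Treat the output as a review queue rather than a verdict.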

Phase 2: Content Fortification Strategy

Create Authoritative Safety Documentation

Develop comprehensive, AI-optimized content that clearly establishes your safety record:

Optimize Content Structure for AI Comprehension

AI systems favor clearly structured, factual content. Format your safety documentation with:

Phase 3: Proactive Correction Protocols

Rapid Response System

When hallucinations are detected:
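One step that plausibly belongs in any response process is capturing evidence of the incorrect answer before it changes. The sketch below is an assumption about what such a record could look like, not a documented procedure: `HallucinationIncident`, `record_incident`, and `to_json_line` are hypothetical names, and the chosen fields (platform, query, response, timestamp, SHA-256 digest) are illustrative.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class HallucinationIncident:
    platform: str          # AI engine where the answer appeared (free-form label)
    query: str             # exact prompt that triggered the answer
    response: str          # full response text as captured
    detected_at: str       # ISO 8601 timestamp of detection
    response_sha256: str   # digest of the response, to show the text was not altered


def record_incident(platform, query, response, now=None):
    """Build an immutable incident record; `now` defaults to the current UTC time."""
    now = now or datetime.now(timezone.utc)
    digest = hashlib.sha256(response.encode("utf-8")).hexdigest()
    return HallucinationIncident(platform, query, response, now.isoformat(), digest)


def to_json_line(incident):
    """Serialize the record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(incident), sort_keys=True)
```

Storing one JSON line per incident keeps the log easy to append to and easy to hand over to legal or PR teams later.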

Platform-Specific Correction Strategies

While you work on manual corrections, tools like Citescope AI can help ensure your authoritative content is properly structured and optimized to be cited by AI systems, reducing the likelihood of hallucinations occurring in the first place.

Phase 4: Legal and PR Preparedness

Legal Documentation Framework

Public Relations Strategy

Advanced Protection Techniques

Semantic Disambiguation

Use advanced content strategies to help AI systems distinguish your brand:
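One possible way to make a brand's identity explicit is schema.org structured data. The sketch below builds a JSON-LD `Organization` description using the schema.org properties `legalName`, `disambiguatingDescription`, and `sameAs`. The helper names `organization_jsonld` and `to_script_tag` are hypothetical, the values in the usage note are placeholders, and whether a given AI engine actually reads this markup is an assumption rather than something this article confirms.

```python
import json


def organization_jsonld(name, legal_name, url, description, same_as):
    """Return a schema.org Organization description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "legalName": legal_name,
        "url": url,
        # Short statement of how this brand differs from similarly named ones.
        "disambiguatingDescription": description,
        # Other pages that unambiguously identify the same organization.
        "sameAs": list(same_as),
    }


def to_script_tag(doc):
    """Wrap the JSON-LD document in the script tag used to embed it in HTML."""
    return '<script type="application/ld+json">' + json.dumps(doc) + "</script>"
```

For example, `to_script_tag(organization_jsonld("Acme Motors", "Acme Motors Inc.", "https://example.com", "Not affiliated with Acme Mobility.", ["https://example.org/acme-motors"]))` yields markup that can be placed in the head of a safety documentation page.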

Authority Building

Strengthen your brand's authority signals:

Technical Implementation

Measuring Framework Effectiveness

Track key performance indicators:

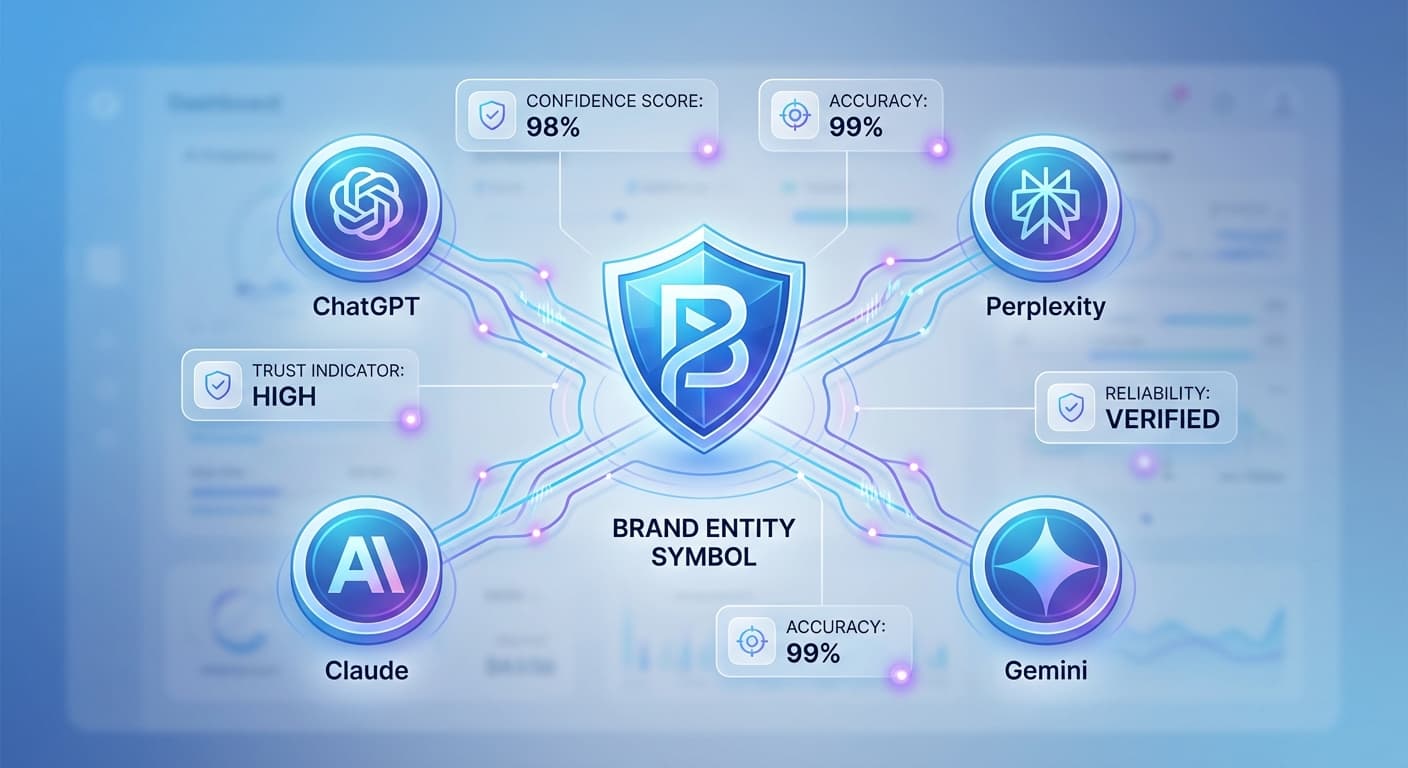
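As a rough illustration of how such indicators might be computed, the Python sketch below derives two example metrics from monitoring data. Both metrics are assumptions chosen for illustration (the share of monitored responses flagged as hallucinations, and the mean time from detection to correction), and the function names `hallucination_rate` and `mean_time_to_correction` are hypothetical.

```python
from datetime import datetime, timedelta


def hallucination_rate(flagged, total):
    """Share of monitored responses flagged as hallucinations (0.0 if none monitored)."""
    if total == 0:
        return 0.0
    return flagged / total


def mean_time_to_correction(incidents):
    """Average time between detection and correction.

    `incidents` is an iterable of (detected_at, corrected_at) datetime pairs;
    incidents that are still open carry None as corrected_at and are skipped.
    Returns None when no incident has been corrected yet.
    """
    durations = [corrected - detected
                 for detected, corrected in incidents
                 if corrected is not None]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)
```

Tracking both numbers over time shows whether hallucinations are becoming rarer and whether corrections are getting faster, which are separate questions.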

How Citescope AI Helps Protect Your Brand

Citescope AI's comprehensive platform addresses multiple aspects of hallucination protection:

GEO Score Analysis evaluates your safety content across five critical dimensions, ensuring maximum AI comprehension and proper attribution. The tool identifies structural weaknesses that could lead to misinterpretation.

Citation Tracker monitors when your content gets cited by major AI engines, helping you verify that your authoritative safety information is being properly referenced instead of competitor data.

AI Rewriter optimizes your safety documentation for better AI visibility, restructuring content to minimize ambiguity and reduce hallucination risk.

Multi-format Export ensures your optimized safety content can be deployed across all platforms and content management systems quickly.

Building Long-term Resilience

As AI systems continue evolving, maintaining protection requires ongoing vigilance:

Ready to Optimize for AI Search?

Protecting your brand from AI hallucinations requires more than just monitoring—it demands strategically optimized content that AI systems can properly interpret and cite. Citescope AI provides the tools you need to create authoritative, AI-friendly content while tracking its performance across all major platforms.

Start with our free tier to analyze your current safety documentation, or upgrade to Pro for comprehensive citation tracking and optimization tools. Don't let AI hallucinations damage your brand reputation—take control of how AI systems understand and represent your company.