How to Build a Brand Safety Protection System When AI Search Engines Start Hallucinating False Product Recalls and Regulatory Violations That Tank Your Stock Price Before You Can Issue Corrections


In March 2025, a major automotive manufacturer watched its stock price fall 12% in pre-market trading after ChatGPT incorrectly told users that the company's latest SUV model had been recalled for brake failures. No such recall existed. The AI had hallucinated it by misinterpreting a safety study about similar vehicles from a different brand.

This isn't science fiction. It's the new reality of doing business in 2026. With AI search engines now handling over 35% of all search queries and processing more than 800 million requests daily across platforms like ChatGPT, Perplexity, Claude, and Gemini, AI hallucinations about your brand can cause real financial damage before you even know they're happening.

The Growing Threat of AI Brand Hallucinations

AI hallucinations, in which an AI system generates false or misleading information with apparent confidence, have evolved from amusing chatbot quirks into serious business threats. Recent data from the AI Safety Institute shows that even the most advanced language models still hallucinate factual information 15-20% of the time when discussing specific companies or products.

The problem is compounded by AI search engines' growing influence:

Real-World Consequences We've Seen in 2025-2026

Building Your Brand Safety Protection System

Step 1: Implement Real-Time AI Monitoring

Traditional brand monitoring tools weren't designed for AI search engines. You need specialized monitoring that can:

Set up alerts for critical keywords like:
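As a rough illustration of keyword alerting, the sketch below scans a captured AI answer for sentences that mention your brand alongside a risk term. Everything in it is an assumption for illustration: the `ALERT_TERMS` list is drawn from the risks this article names (recalls, regulatory violations), `scan_response` and `Alert` are hypothetical names, and how you capture answers from each platform is left out entirely.

```python
import re
from dataclasses import dataclass

# Hypothetical alert terms based on the risks discussed in this article.
# Replace them with the terms that matter for your own brand.
ALERT_TERMS = ("recall", "recalled", "regulatory violation", "brake failure")


@dataclass
class Alert:
    platform: str  # where the answer came from, e.g. "ChatGPT"
    term: str      # the alert term that matched
    excerpt: str   # the sentence that triggered the alert


def scan_response(platform: str, brand: str, text: str) -> list[Alert]:
    """Flag sentences that mention the brand together with an alert term."""
    alerts = []
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        lowered = sentence.lower()
        if brand.lower() not in lowered:
            continue
        for term in ALERT_TERMS:
            # Whole-word match so "recall" does not fire inside other words.
            if re.search(rf"\b{re.escape(term)}\b", lowered):
                alerts.append(Alert(platform, term, sentence.strip()))
                break  # one alert per sentence is enough
    return alerts
```

Calling `scan_response("ChatGPT", "Acme", answer_text)` returns a list of `Alert` records you could route to email or chat. Plain keyword matching will produce false positives (for example, an answer correctly stating that no recall exists), so treat each hit as a prompt for human review rather than a confirmed hallucination.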

Step 2: Create an Authoritative Content Foundation

AI systems need clear, structured, authoritative information about your brand to reference correctly. This means:

Optimize your official content for AI consumption:

Establish content authority signals:
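One common way to publish clear, structured facts about an organization is schema.org markup embedded as JSON-LD. The sketch below assumes that approach. The article does not prescribe a specific format, and whether a given AI system actually reads this markup is not guaranteed. `organization_jsonld` is a hypothetical helper name, and the example values are placeholders.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Render a schema.org Organization description as a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # Official profiles that confirm this is the same organization.
        "sameAs": same_as,
    }
    # Escape "</" so a value can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(organization_jsonld(
        "Example Motors",           # placeholder company name
        "https://www.example.com",  # placeholder official site
        ["https://www.linkedin.com/company/example"],
    ))
```

The resulting tag would go in the `<head>` of your official pages, so that the machine-readable facts sit next to the human-readable ones.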

Step 3: Build Rapid Response Protocols

When AI hallucinations occur, speed is everything. Your response protocol should include:

Immediate Response Team (0-2 hours):

Communication Strategy (2-6 hours):

Long-term Mitigation (6+ hours):
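The three time windows above can be encoded so that an incident dashboard or on-call bot always knows which phase applies. This is a minimal sketch: the phase names and hour limits come from the protocol above, while `current_phase` is a hypothetical helper and the choice to assign an exact boundary (for example, precisely 2 hours) to the later phase is an assumption.

```python
from datetime import datetime, timedelta

# Phase limits taken from the protocol above: 0-2 h, 2-6 h, 6+ h.
PHASES = (
    (timedelta(hours=2), "Immediate Response Team"),
    (timedelta(hours=6), "Communication Strategy"),
)
FINAL_PHASE = "Long-term Mitigation"


def current_phase(detected_at: datetime, now: datetime) -> str:
    """Return the response phase for an incident detected at `detected_at`."""
    elapsed = now - detected_at
    if elapsed < timedelta(0):
        raise ValueError("now precedes detected_at")
    for limit, name in PHASES:
        # Assumption: an exact boundary belongs to the later phase.
        if elapsed < limit:
            return name
    return FINAL_PHASE
```

For an incident detected at 09:00, `current_phase` returns "Immediate Response Team" at 09:30, "Communication Strategy" at 11:00, and "Long-term Mitigation" from 15:00 onward.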

Step 4: Proactive Content Optimization

The best defense is ensuring AI systems have accurate information to reference:

Create AI-friendly fact sheets:

Optimize for accuracy over marketing:

Advanced Protection Strategies

Legal Preparedness

Work with legal counsel to:

Stakeholder Communication

For Public Companies:

For All Companies:

Technical Infrastructure

Invest in systems that can:

How Citescope Ai Helps Protect Your Brand

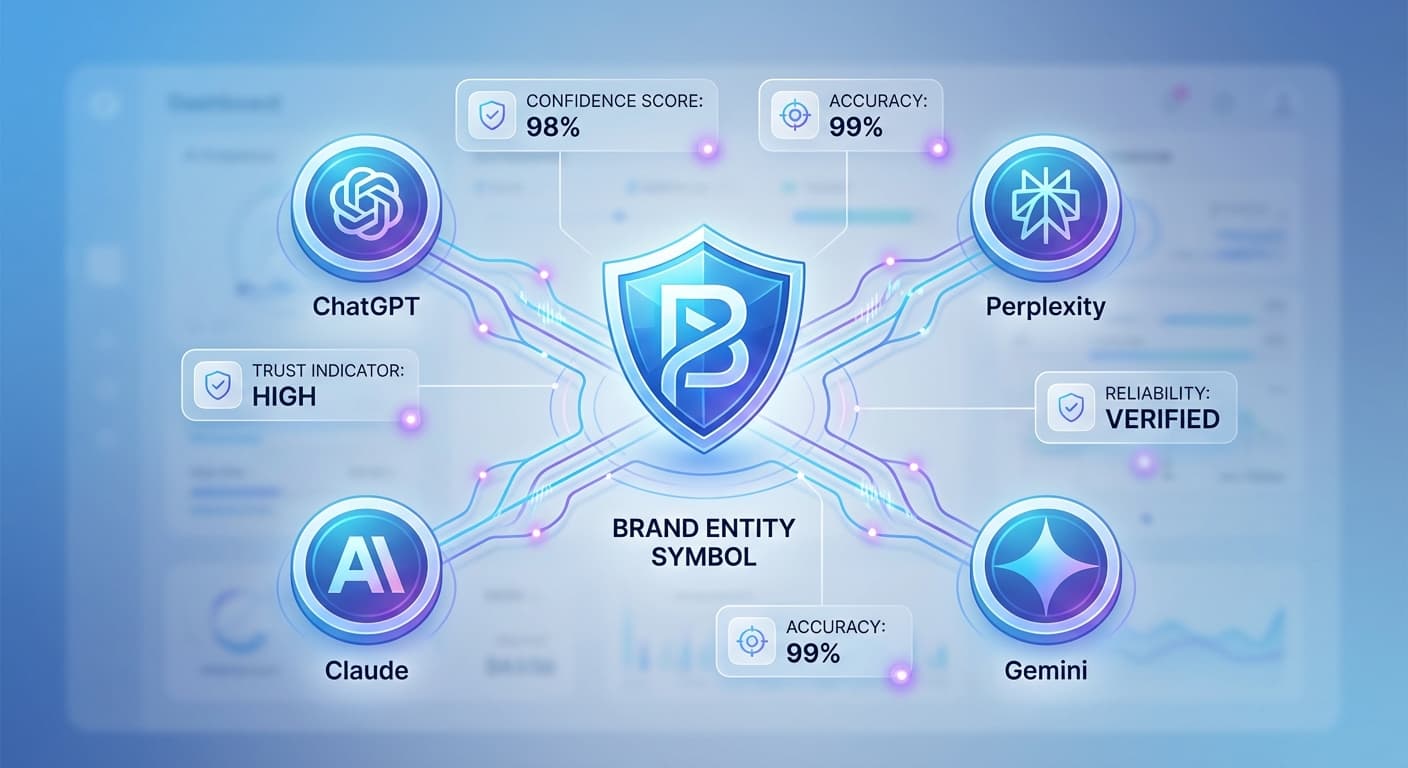

While building a comprehensive brand safety system requires multiple tools and strategies, Citescope Ai's Citation Tracker provides crucial real-time visibility into how AI search engines are referencing your brand. Our platform monitors citations across ChatGPT, Perplexity, Claude, and Gemini, helping you catch potential hallucinations early.

The GEO Score analysis also helps ensure your authoritative content is optimized for accurate AI interpretation, reducing the likelihood of misrepresentation in the first place. When AI systems can easily understand and accurately cite your content, they're less likely to hallucinate false information.

Measuring Success: Key Metrics to Track

The Future of AI Brand Safety

As AI systems become more sophisticated, we can expect:

However, the fundamental challenge remains: AI systems will continue to occasionally generate false information with confidence, and brands need robust protection systems to minimize damage.

Ready to Optimize for AI Search?

Protecting your brand in the age of AI search requires more than monitoring: you also need to ensure AI systems have accurate, well-structured information to reference. Citescope Ai helps you optimize your content for better AI understanding while tracking how your brand appears across major AI platforms. Start with our free tier and get 3 content optimizations to begin building your AI brand safety foundation. Try Citescope Ai today and take control of how AI represents your brand.